With Claude’s help

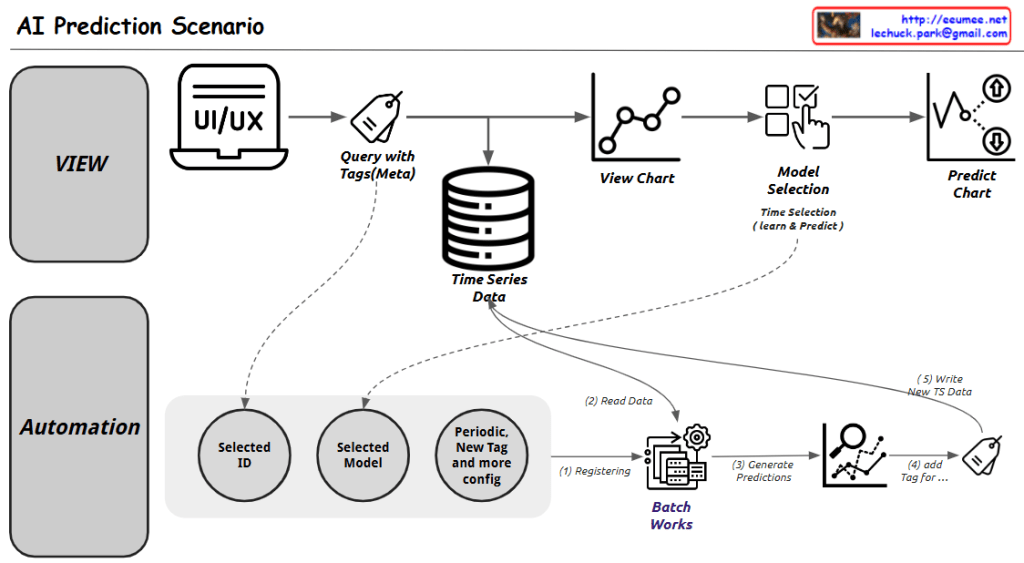

The image above compares three time series forecasting methods (ARIMA, Prophet, and LSTM), summarizing the characteristics and typical applications of each. The key points are:

ARIMA (Autoregressive Integrated Moving Average):

- Suitable for linear, stationary datasets (or ones made stationary by differencing) where interpretability is important

- Can be used for short-term stock price prediction and monthly energy consumption forecasting
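To make the ARIMA idea concrete, here is a minimal sketch of an ARIMA(1,1,0) model in plain Python. The series is differenced once (the "integrated" part), an AR(1) model is fitted to the differences by ordinary least squares, and forecasts are summed back onto the last observed value. It leaves out the moving-average terms and the maximum-likelihood fitting that a library such as statsmodels would use; the function names and the temperature readings are hypothetical.

```python
def fit_arima_110(series):
    """Fit ARIMA(1,1,0): an AR(1) model on first differences.

    Model: d_t = c + phi * d_{t-1} + e_t, where d_t = y_t - y_{t-1}.
    c and phi are estimated by ordinary least squares.
    """
    diffs = [b - a for a, b in zip(series, series[1:])]
    x = diffs[:-1]  # lagged differences d_{t-1}
    y = diffs[1:]   # current differences d_t
    n = len(x)
    if n < 2:
        raise ValueError("need at least 4 observations")
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    phi = sxy / sxx if sxx else 0.0  # constant differences: no AR signal
    c = mean_y - phi * mean_x
    return c, phi


def forecast_arima_110(series, steps):
    """Forecast `steps` values ahead on the original scale."""
    c, phi = fit_arima_110(series)
    level = series[-1]
    diff = series[-1] - series[-2]
    out = []
    for _ in range(steps):
        diff = c + phi * diff  # predict the next difference
        level = level + diff   # integrate back to the original scale
        out.append(level)
    return out


# Hypothetical hourly server room temperatures (°C).
temps = [22.0, 22.3, 22.7, 23.0, 23.2, 23.5]
print(forecast_arima_110(temps, steps=3))
```

Because every parameter is a plain number (an intercept and one autoregressive coefficient), the fitted model is easy to inspect, which is the interpretability advantage noted above.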

Prophet:

- A quick, easy-to-use forecasting method for data with clear seasonality and trend

- Suitable for social media traffic and retail sales predictions
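Prophet models a series as the sum of a trend, seasonal components, and holiday effects. As a rough illustration of that additive structure, and not Prophet's own fitting procedure (which uses a piecewise-linear or logistic trend and Fourier-series seasonality), the sketch below fits a straight-line trend and then averages the detrended values at each position in the seasonal cycle. The function names, the `period` parameter, and the power readings are hypothetical.

```python
def fit_additive(series, period):
    """Fit y_t ~ intercept + slope * t + seasonal[t % period]."""
    n = len(series)
    if n < 2 * period:
        raise ValueError("need at least two full seasonal cycles")
    mean_t = (n - 1) / 2
    mean_y = sum(series) / n
    # Least-squares straight-line trend.
    sxx = sum((t - mean_t) ** 2 for t in range(n))
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in enumerate(series))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_t
    # Average detrended value at each position in the cycle.
    buckets = [[] for _ in range(period)]
    for t, y in enumerate(series):
        buckets[t % period].append(y - (intercept + slope * t))
    seasonal = [sum(b) / len(b) for b in buckets]
    # Center the seasonal effects on zero and move any offset into the level.
    offset = sum(seasonal) / period
    seasonal = [s - offset for s in seasonal]
    return intercept + offset, slope, seasonal


def forecast_additive(series, period, steps):
    """Extend the trend and repeat the seasonal pattern `steps` ahead."""
    intercept, slope, seasonal = fit_additive(series, period)
    n = len(series)
    return [intercept + slope * t + seasonal[t % period]
            for t in range(n, n + steps)]


# Hypothetical daily power usage (kWh) over two weeks, weekly cycle.
daily_kwh = [410, 415, 420, 418, 412, 380, 375,
             414, 419, 424, 421, 416, 384, 378]
print(forecast_additive(daily_kwh, period=7, steps=7))
```

Fitting the trend first and the seasonality second is a simplification; Prophet estimates its components jointly, which keeps the trend from absorbing part of an incomplete or lopsided seasonal cycle.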

LSTM (Long Short-Term Memory):

- Suitable for large, feature-rich datasets with complex, nonlinear patterns

- Can be used for sensor data anomaly detection, weather forecasting, and long-term financial market prediction
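What lets an LSTM capture long-range, nonlinear patterns is its gated cell state. The sketch below implements one step of the standard LSTM cell (forget, input, and output gates plus a candidate update) for a scalar input and a hidden size of 1, using only the standard library. The parameter layout and the example weights and readings are hypothetical; in practice the weights are learned from data, for example with PyTorch's `torch.nn.LSTM` or the Keras `LSTM` layer, and the hidden state is much larger than 1.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def lstm_step(x, h_prev, c_prev, p):
    """One step of a scalar LSTM cell (hidden size 1).

    p maps each gate name to (w, u, b): input weight, recurrent weight, bias.
    """
    def pre(gate):
        w, u, b = p[gate]
        return w * x + u * h_prev + b

    f = sigmoid(pre("forget"))        # share of the old cell state to keep
    i = sigmoid(pre("input"))         # share of the candidate to write
    o = sigmoid(pre("output"))        # share of the cell state to expose
    g = math.tanh(pre("candidate"))   # candidate values for the cell state
    c = f * c_prev + i * g            # new cell state (long-term memory)
    h = o * math.tanh(c)              # new hidden state (step output)
    return h, c


def run_lstm(sequence, p):
    """Feed a sequence through the cell, starting from zero state."""
    h, c = 0.0, 0.0
    for x in sequence:
        h, c = lstm_step(x, h, c, p)
    return h, c


# Hypothetical weights and normalized sensor readings.
params = {
    "forget": (0.5, 0.1, 1.0),
    "input": (0.8, -0.2, 0.0),
    "output": (0.6, 0.3, 0.0),
    "candidate": (1.2, 0.4, -0.1),
}
readings = [0.1, 0.4, 0.35, 0.9, 0.2]
print(run_lstm(readings, params))
```

A positive forget-gate bias, as in the example weights, keeps the forget gate close to 1 so the cell state carries information across many steps, which is what makes LSTMs suited to longer-horizon patterns.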

Applications in a data center:

- ARIMA: Can be used to predict short-term changes in server room temperature and power consumption

- Prophet: Can be used to forecast daily, weekly, and monthly power usage patterns

- LSTM: Can be used to analyze complex sensor data patterns and make long-term predictions

Using these prediction models can improve energy efficiency and enable proactive maintenance in data centers. When selecting a prediction method, consider the characteristics of the data and the specific forecasting requirements.
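The selection guidance in this summary can be written down as a rough rule of thumb. The sketch below encodes the criteria listed for each method; the `SeriesProfile` fields and the `suggest_method` function are hypothetical, and any choice it suggests should still be checked by comparing candidate models on held-out data.

```python
from dataclasses import dataclass


@dataclass
class SeriesProfile:
    """Hypothetical summary of a forecasting task."""
    nonlinear: bool               # patterns a linear model cannot capture
    large_feature_rich: bool      # many observations and/or input features
    clear_seasonality: bool       # visible trend plus daily/weekly cycles
    needs_interpretability: bool  # model parameters must be explainable


def suggest_method(profile):
    # Nonlinear patterns in large, feature-rich data favor LSTM,
    # unless the model must be easy to interpret.
    if (profile.nonlinear and profile.large_feature_rich
            and not profile.needs_interpretability):
        return "LSTM"
    # Clear seasonality and trend favor Prophet's additive model.
    if profile.clear_seasonality:
        return "Prophet"
    # Otherwise default to ARIMA for linear, interpretable forecasts.
    return "ARIMA"


# Short-term server room temperature: linear, interpretable.
print(suggest_method(SeriesProfile(False, False, False, True)))  # ARIMA
# Daily/weekly power usage: strong cycles.
print(suggest_method(SeriesProfile(False, False, True, False)))  # Prophet
# Many correlated sensor streams: nonlinear, feature-rich.
print(suggest_method(SeriesProfile(True, True, False, False)))   # LSTM
```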