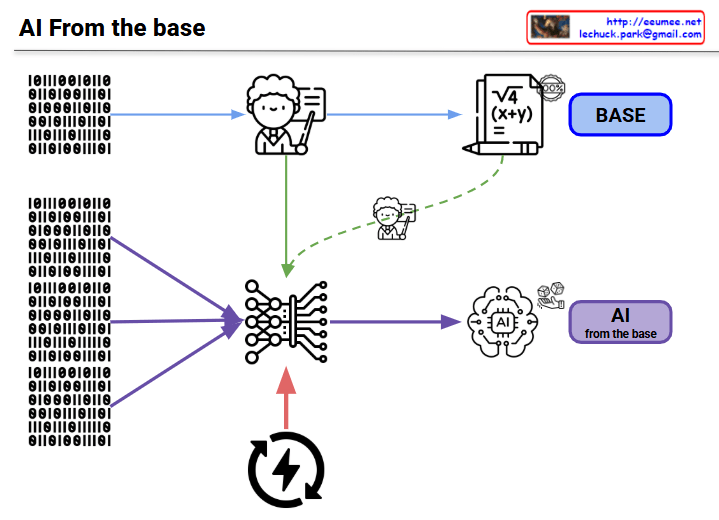

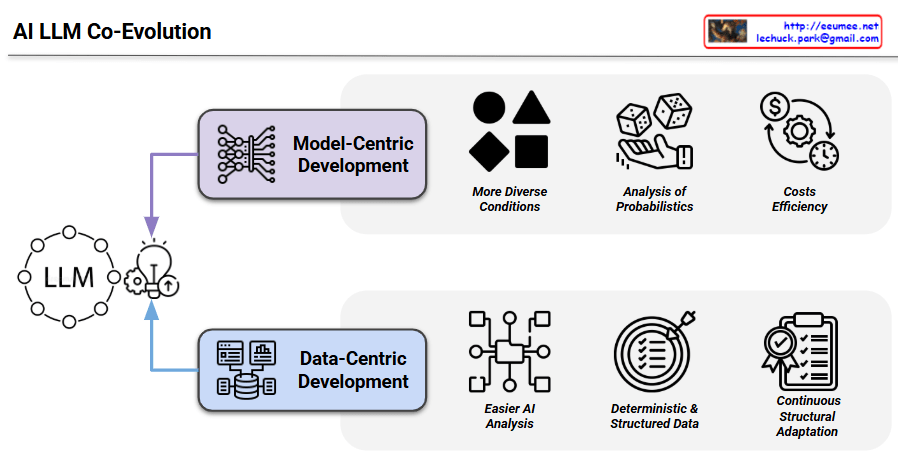

This image illustrates the "AI LLM Co-Evolution" process, showing how large language models (LLMs) develop through two complementary approaches.

The diagram centers on the LLM, with two main development pathways:

1. Model-Centric Development

- More Diverse Conditions: Handling various data types and scenarios (represented by geometric shapes)

- Analysis of Probabilities: Probabilistic approaches to model behavior (shown with dice icons)

- Cost Efficiency: Economic optimization in model development (depicted with a dollar sign and gear)

2. Data-Centric Development

- Easier AI Analysis: Simplified analytical processes (represented by network diagrams)

- Deterministic Data: Predictable and structured data patterns (shown with concentric circles)

- Continuous Structural Adaptation: Ongoing improvements and adjustments (illustrated with a checklist icon)

The diagram suggests that modern AI development needs model-focused and data-focused approaches to work together. Each pathway offers distinct advantages:

- Model-centric development focuses on architectural improvements, probabilistic reasoning, and computational efficiency

- Data-centric development emphasizes data quality, deterministic processes, and adaptive structures

This co-evolutionary framework suggests that LLM development is most effective when the two approaches are integrated, so that the strengths of each compensate for the limits of the other.

With Claude