Keep the world

The Computing for the Fair Human Life.

From Claude with some prompting

RTT is measured by sending a packet (SEQ=A) and timing how long its acknowledgment (ACK) takes to come back, which gives a direct reading of network latency. Bandwidth is estimated by sending a sequence of packets (SEQ A to Z) and dividing the amount of data acknowledged by the time that elapses until the ACK for the last packet arrives.
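As a rough illustration of the two measurements, the sketch below computes an RTT from one send/ACK timestamp pair and a throughput figure from a burst of packets. The function names, timestamps, and packet sizes are all made up for the example; they do not come from the image.

```python
def rtt_seconds(send_time, ack_time):
    """RTT for one packet: when the ACK arrived minus when the packet left."""
    return ack_time - send_time


def throughput_bps(bytes_acked, first_send_time, last_ack_time):
    """Acknowledged bytes divided by elapsed time, in bits per second."""
    elapsed = last_ack_time - first_send_time
    if elapsed <= 0:
        raise ValueError("elapsed time must be positive")
    return bytes_acked * 8 / elapsed


# Hypothetical numbers: SEQ=A leaves at t=0.000 s and its ACK returns at t=0.040 s.
rtt = rtt_seconds(0.000, 0.040)

# Hypothetical burst: 26 packets (A to Z) of 1,000 bytes, last ACK seen at t=0.100 s.
rate = throughput_bps(26 * 1000, 0.000, 0.100)

print(f"RTT = {rtt * 1000:.0f} ms, throughput = {rate / 1e6:.2f} Mbit/s")
```

With these assumed values the RTT is 40 ms and the throughput is about 2.08 Mbit/s.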

This image explains how to measure round-trip time (RTT) and bandwidth utilization to control and optimize TCP (Transmission Control Protocol) communications. The measured metrics are leveraged by various mechanisms to improve the reliability and efficiency of TCP.

Several mechanisms build on these measurements. TCP Timeout sets an appropriate retransmission timeout by taking RTT variation into account. TIMELY provides delay information to the transport layer based on RTT measurements.
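One common way to turn RTT samples into a timeout is the smoothed estimator from RFC 6298, sketched below. The class name and the sample values are hypothetical, and the `min_rto` parameter is lowered in the usage lines only so that the effect of RTT variation is visible; the RFC recommends a 1 second floor.

```python
class RtoEstimator:
    """Retransmission timeout estimator following the RFC 6298 update rules."""

    ALPHA = 1 / 8  # weight of a new sample in the smoothed RTT
    BETA = 1 / 4   # weight of a new sample in the RTT variation
    K = 4          # how many "variations" of headroom to add

    def __init__(self, clock_granularity=0.001, min_rto=1.0):
        self.g = clock_granularity
        self.min_rto = min_rto
        self.srtt = None    # smoothed RTT
        self.rttvar = None  # smoothed RTT variation
        self.rto = 1.0      # initial RTO before any sample is available

    def update(self, sample):
        """Feed one RTT sample (seconds) and return the new RTO."""
        if self.srtt is None:
            # First measurement initializes both estimators.
            self.srtt = sample
            self.rttvar = sample / 2
        else:
            # RTTVAR is updated first, using the previous SRTT.
            self.rttvar = (1 - self.BETA) * self.rttvar + self.BETA * abs(self.srtt - sample)
            self.srtt = (1 - self.ALPHA) * self.srtt + self.ALPHA * sample
        self.rto = max(self.min_rto, self.srtt + max(self.g, self.K * self.rttvar))
        return self.rto


# Hypothetical samples: two steady RTTs, then a spike.
est = RtoEstimator(min_rto=0.2)
rtos = [est.update(s) for s in (0.100, 0.100, 0.300)]
print([round(r, 4) for r in rtos])
```

The timeout tightens while the RTT is steady and widens again after the spike, which is the "RTT variation" behavior described above.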

Furthermore, TCP BBR (Bottleneck Bandwidth and Round-trip propagation time) models the bottleneck bandwidth and the round-trip propagation time to determine the optimal sending rate for current network conditions.
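The core idea of the BBR model can be sketched in a few lines: keep the highest recent delivery rate as the bottleneck bandwidth estimate, keep the lowest recent RTT as the propagation delay estimate, and multiply them to get the bandwidth-delay product. This is a deliberately simplified sketch, not the real BBR state machine: the class name, the single sample-count window used for both filters, and the sample values are assumptions, and the probing phases are omitted.

```python
from collections import deque


class BbrModel:
    """Toy path model: max-filtered bandwidth and min-filtered RTT."""

    def __init__(self, window=10):
        # Assumption: one sample-count window for both filters.
        self.rates = deque(maxlen=window)  # delivery-rate samples, bytes/s
        self.rtts = deque(maxlen=window)   # RTT samples, seconds

    def on_ack(self, delivered_bytes, interval_s, rtt_s):
        """Record one delivery-rate sample and one RTT sample."""
        self.rates.append(delivered_bytes / interval_s)
        self.rtts.append(rtt_s)

    def btl_bw(self):
        return max(self.rates)  # bottleneck bandwidth estimate

    def rt_prop(self):
        return min(self.rtts)   # round-trip propagation time estimate

    def bdp_bytes(self):
        # Data that can be in flight without building a queue.
        return self.btl_bw() * self.rt_prop()

    def pacing_rate(self, gain=1.0):
        return gain * self.btl_bw()


# Hypothetical ACK samples: (bytes delivered, interval in s, RTT in s).
model = BbrModel()
for delivered, interval, rtt_sample in [(12_500, 0.01, 0.050),
                                        (10_000, 0.01, 0.040),
                                        (12_500, 0.01, 0.060)]:
    model.on_ack(delivered, interval, rtt_sample)

print(model.btl_bw(), model.rt_prop(), model.bdp_bytes())
```

With these assumed samples the model settles on roughly 1.25 MB/s and 40 ms, giving a bandwidth-delay product of about 50 KB.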

In summary, this image illustrates how measuring RTT and bandwidth serves as the foundation for various mechanisms that improve the reliability and efficiency of the TCP protocol by adapting to real-time network conditions.

From Claude with some prompting

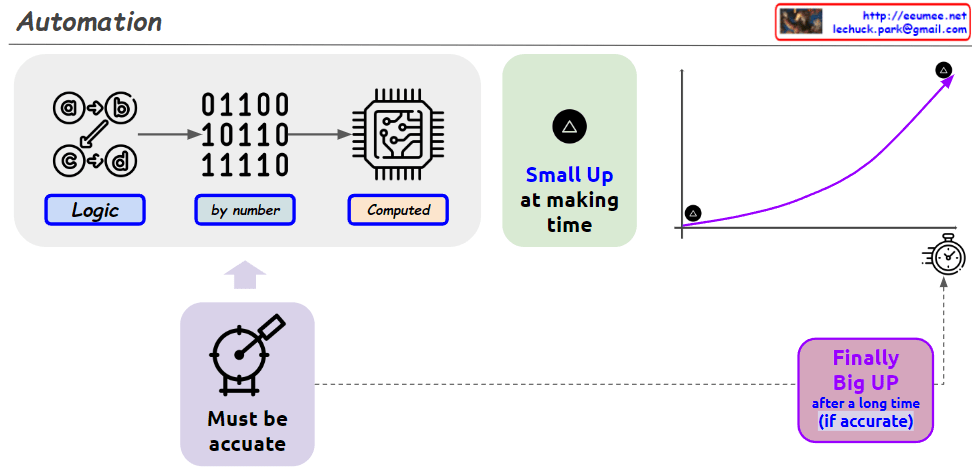

This image visually illustrates the automation process and emphasizes its long-term potential and impact. While automation may appear to be a small improvement at the present moment, the image highlights that with an accurate and systematic configuration, continuous utilization of automation over an extended period can lead to significant growth and advancement.

Initially, the computed output exhibits a gradual upward curve labeled “Small Up at making time.” However, as indicated by “Must be accurate,” precision is a prerequisite for realizing the full potential of automation. If accuracy is ensured, the sharp upward trend depicted as “Finally Big UP after a long time (if accurate)” can be achieved over the long run.

Therefore, the image suggests that although automation may seem like a small step currently, with precise and sustained implementation, it has the potential to yield substantial gains and achievements over time.

From Claude with some prompting

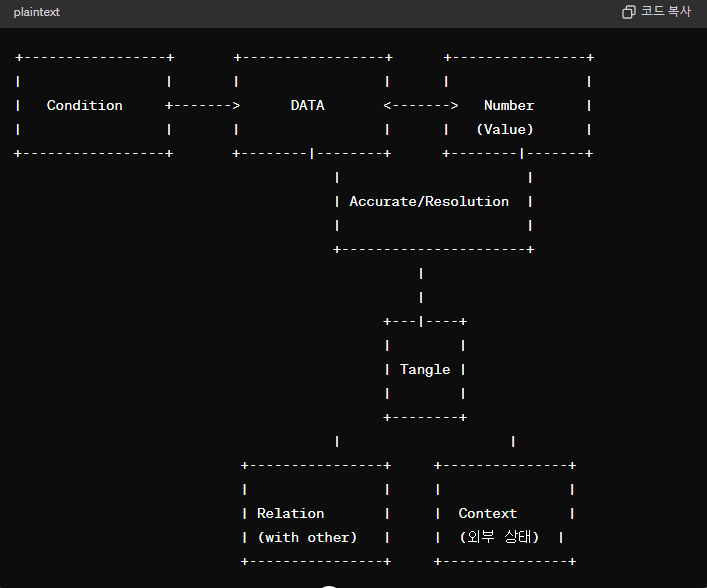

This image presents a comprehensive definition of data that goes beyond just numerical values. To clearly understand data, several elements must be considered.

First, the accuracy and resolution of the data itself are crucial. The “Number (Value)” represents numerical values that must be precise and have an appropriate level of resolution.

Second, data is closely related to external factors. “Condition” indicates a relationship with the state or condition of other data, while “Relation with other” suggests interconnectedness with other data sets.

Third, “Tangle” illustrates that data is not merely a simple number but is complexly intertwined with various elements. To clearly define data, these intricate interconnections and interdependencies must be accounted for.

In essence, the image presents a definition of data that encompasses accuracy, resolution, relationships with external conditions, and intricate interconnectedness. It emphasizes that to truly grasp the nature of data, one must comprehensively consider all these aspects.

The image underscores that data cannot be reduced to just numeric values; rather, it is a multifaceted concept intricately tied to precision, granularity, external factors, and interdependent relationships. Fully understanding data requires a holistic examination of all these interlinked elements.

Updated by GPT-4o

From Claude with some prompting

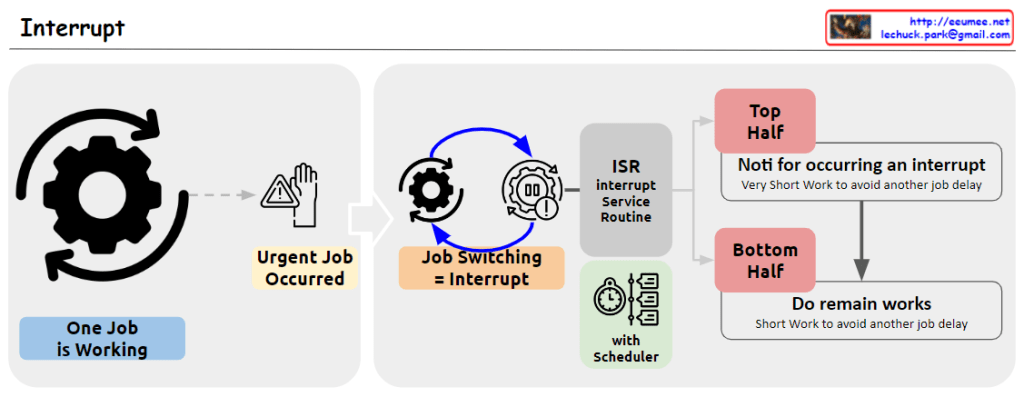

The image illustrates the process of handling interrupts in a computer system. When an urgent job (Urgent Job Occurred) arises while another job (One Job is Working) is executing, an interrupt (Job Switching = Interrupt) occurs. This triggers the Interrupt Service Routine (ISR) to handle the interrupt.

The interrupt handling process is divided into two halves: the Top Half and the Bottom Half. The Top Half runs first and does only “Very Short Work to avoid another job delay,” notifying the system that the interrupt occurred. The Bottom Half handles the remaining work later, and it too is kept to “Short Work to avoid another job delay.”
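The split can be mimicked in ordinary Python to show the ordering of events. This is only a simulation with made-up names (`simulate`, `top_half`, `bottom_half`); real interrupt handlers run in the kernel, not in application code like this.

```python
from collections import deque


def simulate():
    """Return the order of events when an urgent job interrupts a running job."""
    log = []
    pending = deque()  # work the top half defers to the bottom half

    def top_half(event):
        # Very short work: record that the event happened, then return at once.
        log.append(f"top half: note {event}")
        pending.append(event)

    def bottom_half():
        # Remaining work, done later when the interrupted job is not held up.
        while pending:
            log.append(f"bottom half: finish {pending.popleft()}")

    log.append("job: step 1")
    top_half("urgent job")     # the interrupt arrives mid-job
    log.append("job: step 2")  # the interrupted job resumes almost immediately
    bottom_half()
    return log


for line in simulate():
    print(line)
```

The interrupted job resumes right after the top half returns, and the deferred work runs only afterwards.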

From Claude with some prompting

The image metaphorically connects the process of evolution in the universe with the development stages of AI. After the Big Bang and the subsequent increase in entropy (disorder), life forms evolved through a process of self-organization, creating complex and ordered structures and thereby locally decreasing entropy for as long as life exists in the universe. Similarly, the image suggests that data-intensive AI systems will emerge as the next evolutionary stage after humans.

However, a critical point made is that the data driving AI itself does not possess any inherent intent or purpose, unlike living organisms. Data is merely a collection of information without any intrinsic goals or consciousness. Therefore, it is crucial to imbue the data with appropriate values and ethical principles to prevent AI from spiraling out of human control and indiscriminately increasing entropy.

Ultimately, this image emphasizes the importance of human-centric, value-driven AI development. Rather than warning against AI technology itself, it cautions against the unbridled advancement of data-driven AI systems without human-imposed oversight and ethical frameworks.

Furthermore, the image implies that while life and AI may continue to evolve, decreasing entropy in the process, they will ultimately succumb to the universal law of increasing entropy and reach a state of thermodynamic equilibrium.

In essence, the image thoughtfully juxtaposes scientific concepts of entropy, evolution, and the emergence of AI, highlighting the need for responsible and value-aligned AI development under human guidance, while acknowledging the overarching principles of entropy and equilibrium that govern the universe.

From Claude with some prompting

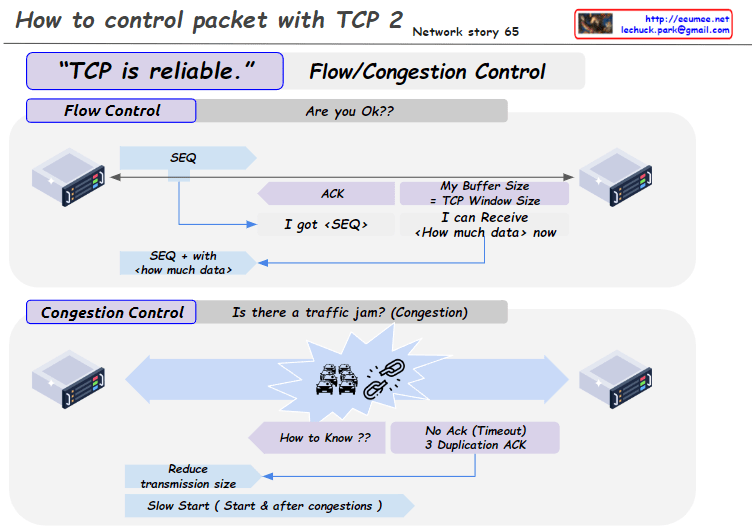

This image illustrates the flow control and congestion control mechanisms, which are examples of why TCP (Transmission Control Protocol) is considered a reliable protocol.

Flow control and congestion control are therefore key reasons TCP is regarded as a reliable transport protocol. Through these mechanisms, TCP prevents data loss, avoids network overload, and ensures stable communication.
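How the two mechanisms combine can be sketched as follows: the sender may have at most `min(cwnd, rwnd)` segments in flight, where `rwnd` is the receiver's advertised window (flow control) and `cwnd` is the sender's congestion window (congestion control). The update rule below is a simplified Reno-style model counted in whole segments per round trip; the function names, starting values, and loss pattern are assumptions for illustration.

```python
def send_window(cwnd, rwnd):
    """Segments the sender may have in flight: limited by both windows."""
    return min(cwnd, rwnd)


def next_cwnd(cwnd, ssthresh, loss):
    """Return (cwnd, ssthresh) for the next round trip."""
    if loss:
        # Multiplicative decrease: halve the window after a loss.
        ssthresh = max(cwnd // 2, 2)
        return ssthresh, ssthresh
    if cwnd < ssthresh:
        # Slow start: double each round trip, up to ssthresh.
        return min(cwnd * 2, ssthresh), ssthresh
    # Congestion avoidance: grow by one segment per round trip.
    return cwnd + 1, ssthresh


def trace(rounds, rwnd, loss_rounds, cwnd=1, ssthresh=8):
    """Effective send window for each round trip."""
    windows = []
    for r in range(rounds):
        windows.append(send_window(cwnd, rwnd))
        cwnd, ssthresh = next_cwnd(cwnd, ssthresh, r in loss_rounds)
    return windows


# Hypothetical run: receiver advertises 6 segments, one loss in round 5.
print(trace(8, rwnd=6, loss_rounds={5}))
# → [1, 2, 4, 6, 6, 6, 5, 6]
```

In this run the receiver's window caps the sender at 6 segments even while `cwnd` keeps growing, and the loss in round 5 briefly pulls the sender below that cap.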