From Claude with some prompting

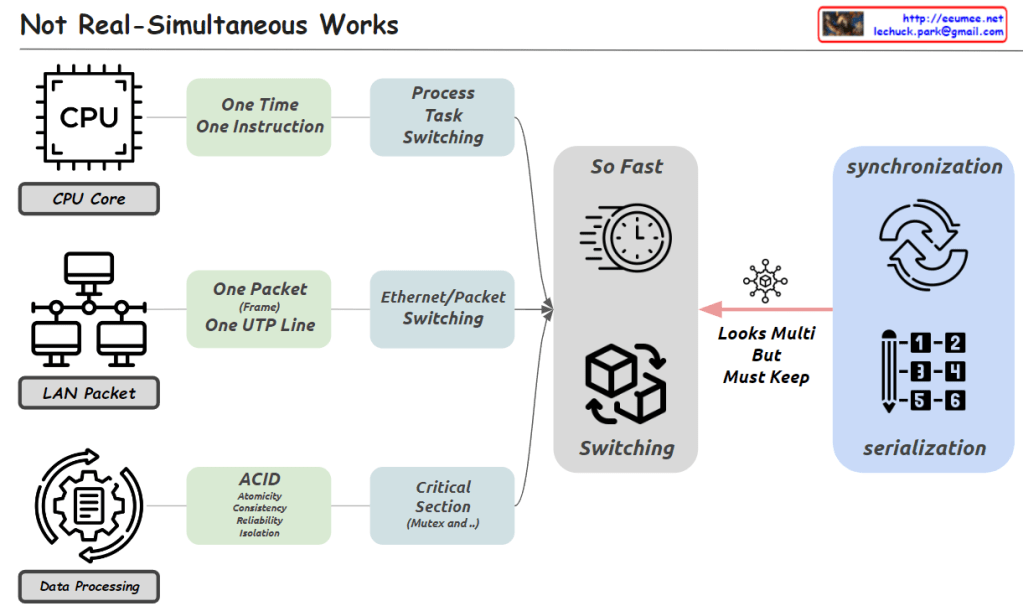

The image emphasizes that what appears to be simultaneous processing is actually very fast serial processing.

From the perspectives of the CPU, the LAN, and data processing, each can handle only one unit of work at a time. A single CPU core executes one instruction at a time, a shared network line carries one packet at a time, and in data processing, critical sections require mutual exclusion, which serializes access.

However, because of very fast switching techniques such as process/task switching on the CPU and packet switching on Ethernet, multiple tasks appear to happen concurrently. In reality, the system is processing single units of work in rapid succession.
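The switching idea can be sketched in a few lines of Python. This is only an illustrative toy, not how a real operating system scheduler works: the names `task` and `run_round_robin` are made up for this example, and generators stand in for processes. Each task does one unit of work and then gives up its turn, so the trace shows the tasks interleaved even though only one unit ever runs at a time.

```python
from collections import deque

def task(name, steps):
    """Hypothetical task: performs one unit of work per step, then yields."""
    for i in range(steps):
        yield f"{name}{i}"

def run_round_robin(tasks):
    """Run tasks strictly one unit at a time, switching after every unit."""
    queue = deque(tasks)
    trace = []
    while queue:
        current = queue.popleft()
        try:
            trace.append(next(current))  # exactly one unit of work runs here
            queue.append(current)        # switch: move the task to the back
        except StopIteration:
            pass                         # task has finished; drop it
    return trace

trace = run_round_robin([task("A", 3), task("B", 2), task("C", 1)])
print(trace)  # ['A0', 'B0', 'C0', 'A1', 'B1', 'A2']
```

From the outside, A, B, and C all seem to make progress together, but the trace is a single serial sequence.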

So concurrency is achieved through fast serial processing, not parallel processing. Even so, critical sections still require synchronization and serialization to maintain data integrity.
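As a minimal sketch of a critical section, the Python example below uses the standard `threading.Lock`. The shared `counter`, the `deposit` function, and the thread and iteration counts are assumptions chosen purely for illustration. The `with lock:` block is the critical section: only one thread at a time can be inside it, so the updates to `counter` are serialized.

```python
import threading

counter = 0                 # shared data (hypothetical example state)
lock = threading.Lock()     # guards every update to counter

def deposit(times):
    """Increment the shared counter, one serialized update at a time."""
    global counter
    for _ in range(times):
        with lock:          # critical section: mutual exclusion
            counter += 1    # read-modify-write happens without interference

threads = [threading.Thread(target=deposit, args=(10_000,)) for _ in range(4)]
for t in threads:
    t.start()
for t in threads:
    t.join()

print(counter)  # 40000
```

Without the lock, the read-modify-write in `counter += 1` is not guaranteed to be atomic, so updates from different threads could overwrite each other.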

In essence, the image highlights that although it looks like simultaneous processing, concurrency is actually implemented through extremely fast serial processing, one work unit at a time.