From Claude with some prompting

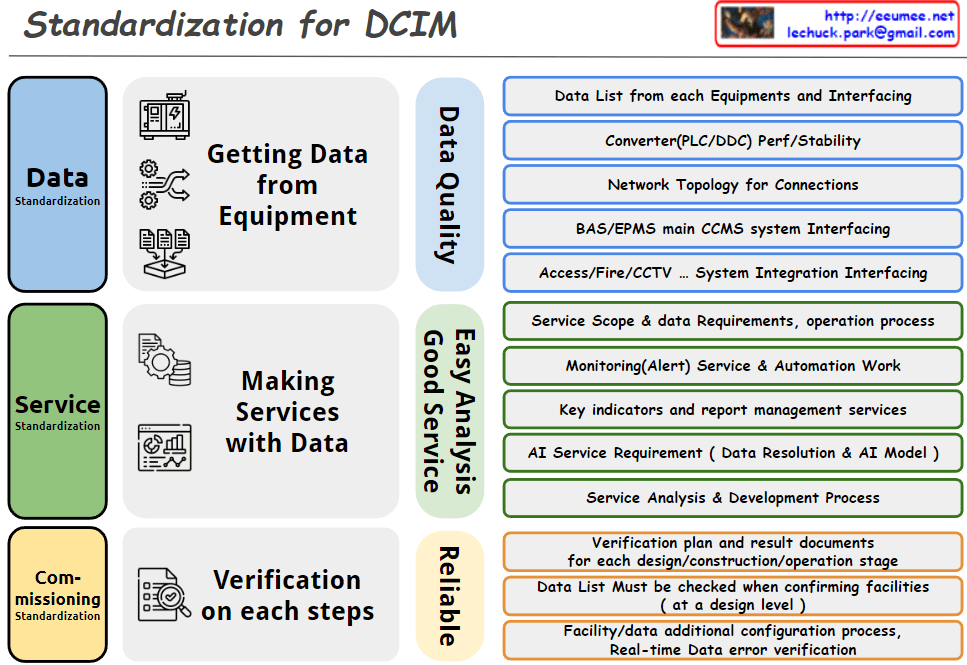

Data Standardization:

- Defined a clear process for systematically collecting data from equipment.

- Proposed an integrated data management approach that covers network topology and the interfaces between systems.

- Emphasized data quality management as key to establishing a reliable data foundation.
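As a rough illustration of what reading-level data quality checks might look like, the sketch below validates individual equipment readings for missing values, implausible ranges, and staleness. The record fields, point names, ranges, and five-minute staleness limit are all hypothetical assumptions, not part of the framework shown in the diagram.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical standardized record for one reading collected from equipment.
@dataclass
class Reading:
    point_id: str        # illustrative "equipment.metric" naming
    value: float | None  # None means the collector received no value
    timestamp: datetime  # time the reading was taken (timezone-aware)

# Assumed plausible ranges per point; real limits would come from equipment specs.
VALID_RANGES = {
    "UPS-01.output_kw": (0.0, 500.0),
    "CRAC-03.supply_temp_c": (5.0, 40.0),
}
MAX_AGE = timedelta(minutes=5)  # assumed staleness limit

def check_quality(reading: Reading, now: datetime) -> list[str]:
    """Return the quality issues found for one reading (empty list = passes)."""
    issues = []
    if reading.point_id not in VALID_RANGES:
        issues.append("unknown point")
    if reading.value is None:
        issues.append("missing value")
    elif reading.point_id in VALID_RANGES:
        low, high = VALID_RANGES[reading.point_id]
        if not low <= reading.value <= high:
            issues.append("out of range")
    if now - reading.timestamp > MAX_AGE:
        issues.append("stale")
    return issues

now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
late_hot = Reading("CRAC-03.supply_temp_c", 85.0, now - timedelta(minutes=10))
print(check_quality(late_hot, now))  # ['out of range', 'stale']
```

Returning a list of named issues, rather than a single pass/fail flag, lets the same check feed both dashboards and data quality reports.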

Service Standardization:

- Structured the process of connecting data to actual services.

- Highlighted practical service implementation, including monitoring services and automation tasks.

- Specified AI service requirements, showing a forward-looking approach.

- Established a foundation for continuous service improvement by including service analysis and development processes.
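To make the link between data and services more concrete, here is a minimal sketch, assuming simple upper-threshold rules, of a monitoring service that maps each point's latest value to a severity and then to an automation task. The `AlarmRule` structure, thresholds, point names, and action names are hypothetical.

```python
from dataclasses import dataclass

# Hypothetical alarm rule linking a standardized data point to a monitoring service.
@dataclass(frozen=True)
class AlarmRule:
    point_id: str
    warning: float   # assumed upper warning threshold
    critical: float  # assumed upper critical threshold

# Hypothetical automation hooks: which task to trigger for each severity.
ACTIONS = {"critical": "page on-call engineer", "warning": "notify operator"}

def evaluate(rules, latest_values):
    """Map each rule's point to 'ok', 'warning', 'critical', or 'no data'."""
    status = {}
    for rule in rules:
        value = latest_values.get(rule.point_id)
        if value is None:
            status[rule.point_id] = "no data"  # surface gaps instead of assuming normal
        elif value >= rule.critical:
            status[rule.point_id] = "critical"
        elif value >= rule.warning:
            status[rule.point_id] = "warning"
        else:
            status[rule.point_id] = "ok"
    return status

def pending_actions(status):
    """List (point_id, action) pairs for points whose severity triggers a task."""
    return [(point, ACTIONS[sev]) for point, sev in sorted(status.items()) if sev in ACTIONS]

rules = [
    AlarmRule("CRAC-03.supply_temp_c", warning=27.0, critical=32.0),
    AlarmRule("UPS-01.load_pct", warning=80.0, critical=95.0),
]
status = evaluate(rules, {"CRAC-03.supply_temp_c": 29.5})
print(status)                   # {'CRAC-03.supply_temp_c': 'warning', 'UPS-01.load_pct': 'no data'}
print(pending_actions(status))  # [('CRAC-03.supply_temp_c', 'notify operator')]
```

Keeping severity evaluation separate from the action table means new automation tasks can be added without touching the monitoring logic.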

Commissioning Standardization:

- Emphasized verification plans and documentation of results at each stage of design, construction, and operation, enabling quality management across the entire lifecycle.

- Included real-time data error verification, enabling an immediate response to potential operational issues.

- Addressed system scalability and flexibility by incorporating processes for adding new facilities and data configurations.
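A commissioning-style verification could, for example, compare the configured point list against the points actually reporting, and rerun that comparison whenever a facility is added. The sketch below assumes a hypothetical `facility.point` naming scheme and plain string lists; it is illustrative only.

```python
def add_facility(point_list, facility_id, point_names):
    """Return a new point list extended with a facility's points (hypothetical naming)."""
    new_points = [f"{facility_id}.{name}" for name in point_names]
    duplicates = set(new_points) & set(point_list)
    if duplicates:
        raise ValueError(f"points already configured: {sorted(duplicates)}")
    return list(point_list) + new_points

def verify_point_list(expected, received):
    """Compare configured points with points actually reporting.

    Returns (missing, unexpected): configured points that are silent, and
    reporting points absent from the configuration. Both empty = check passes.
    """
    expected_set, received_set = set(expected), set(received)
    missing = sorted(expected_set - received_set)
    unexpected = sorted(received_set - expected_set)
    return missing, unexpected

points = ["UPS-01.output_kw", "CRAC-03.supply_temp_c"]
points = add_facility(points, "PDU-07", ["load_kw", "breaker_state"])
received = ["UPS-01.output_kw", "PDU-07.load_kw", "GEN-02.fuel_pct"]
print(verify_point_list(points, received))
# (['CRAC-03.supply_temp_c', 'PDU-07.breaker_state'], ['GEN-02.fuel_pct'])
```

Returning both lists, rather than a single pass/fail result, keeps the output usable as a record of what each verification run found.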

Overall Evaluation:

This standardization approach for data center infrastructure management (DCIM) comprehensively addresses its core elements. The structured flow from data collection through service delivery to continuous verification is particularly noteworthy. By grounding the framework in data quality management and system stability while also planning for advanced technologies such as AI, the approach is both practical and future-oriented. It can serve as a valuable guideline for implementing and operating DCIM.