Blingee

The Computing for the Fair Human Life.

From Perplexity with some prompting

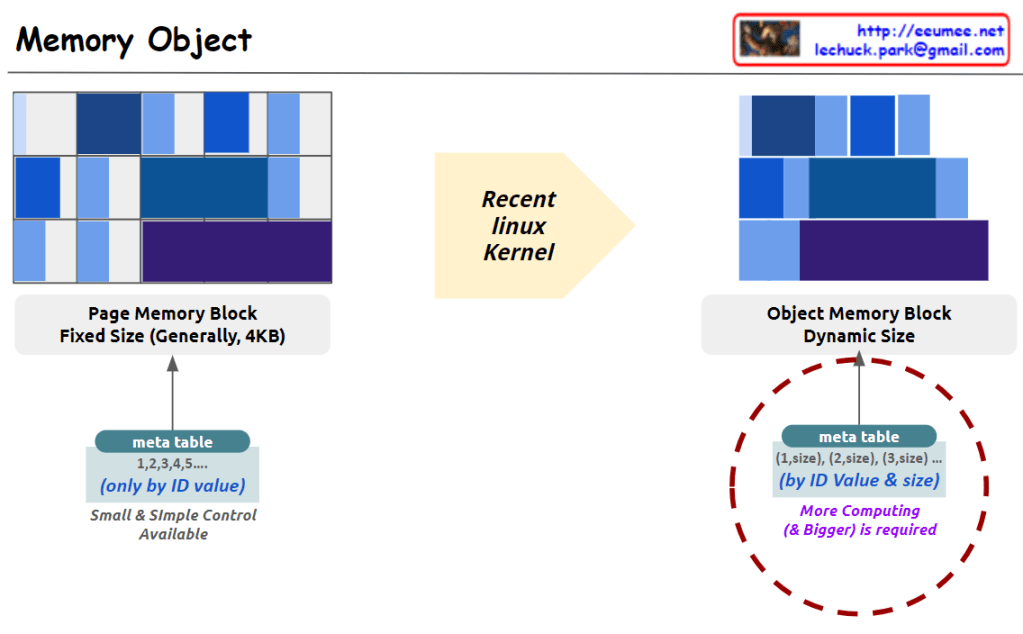

The image illustrates a change in memory object management in recent Linux kernels, focusing on the transition from fixed-size page memory blocks to dynamic-size object memory blocks.

Meta Table: Requires both ID values and sizes (e.g., (1, size), (2, size)), necessitating more complex computation and larger metadata tables.

Object Memory Block: Utilizes blocks of varying sizes to accommodate different memory object sizes.

This transition reflects a shift towards more flexible memory management, allowing for better utilization of memory resources by accommodating objects of different sizes. However, it also introduces increased complexity in managing these memory allocations.
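The difference in metadata between the two schemes can be sketched in a few lines. This is a minimal conceptual illustration, not the kernel's actual allocator code. It assumes a hypothetical fixed block size of 4096 bytes and 1-based block IDs, matching the (1, size), (2, size) entries above. With fixed-size blocks, an offset follows from the ID alone. With variable-size blocks, each ID must be stored together with its size, and offsets come from a running sum of the preceding sizes.

```python
from itertools import accumulate

# Hypothetical fixed block size (bytes), assumed for illustration only.
PAGE_SIZE = 4096


def fixed_block_offset(block_id: int, block_size: int = PAGE_SIZE) -> int:
    """Fixed-size blocks: the offset is computed from the 1-based ID alone,
    so no per-block metadata table is needed."""
    return (block_id - 1) * block_size


def build_meta_table(sizes: list[int]) -> dict[int, tuple[int, int]]:
    """Variable-size blocks: store (offset, size) per 1-based ID.
    Offsets are the running sum of the sizes of all earlier blocks."""
    offsets = [0, *accumulate(sizes)][:-1]  # drop the total past the last block
    return {i + 1: (off, size) for i, (off, size) in enumerate(zip(offsets, sizes))}


def variable_block_offset(table: dict[int, tuple[int, int]], block_id: int) -> int:
    """Variable-size blocks: the offset must be looked up in the meta table."""
    offset, _size = table[block_id]
    return offset


if __name__ == "__main__":
    print(fixed_block_offset(3))              # third fixed block starts at 8192
    table = build_meta_table([64, 256, 32])   # hypothetical object sizes
    print(table)                              # {1: (0, 64), 2: (64, 256), 3: (320, 32)}
    print(variable_block_offset(table, 3))    # 320
```

The fixed scheme trades wasted space inside each block for trivial lookup arithmetic. The variable scheme fits each object more tightly but needs one table entry per block, which is the extra computation and metadata the description refers to.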

From Claude + ChatGPT with some prompting

The image shows a standardization process aimed at delivering high-quality data and consistent services.

Both paths aim to provide high-quality data and consistent service through standardization, either by optimizing legacy systems or by building entirely new setups.

This structured approach helps improve efficiency, consistency, and system performance.

From Claude with some prompting

The image is a diagram titled “Service” that illustrates two main processes.

This diagram appears to illustrate how operational processes and data/technology services interact and integrate, likely representing a data-driven operational and decision-making process.

From Perplexity with some prompting

The image explains the “Golden Circle for DC Operation,” focusing on optimizing data center operations.

This structure emphasizes efficiency, standardization, and a data-centric approach to data center operations.

From Claude with some prompting

This series illustrates the progression from a simplistic average-based view, through recognition of detailed usage patterns, to peak-based optimization, and finally to flexible supply optimization that matches real-time demand. It demonstrates the evolution towards a more efficient and responsive resource management approach.
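The difference between these stages can be made concrete with a small calculation. The sketch below is illustrative only: the demand series is hypothetical, and the "flexible" strategy is assumed to adjust supply every interval in discrete steps (here, units of 10). For each strategy it totals unused capacity (over-provisioning) and unmet demand (shortfall).

```python
def provision_average(demand: list[int]) -> list[float]:
    """Average-based: one constant supply level equal to mean demand."""
    return [sum(demand) / len(demand)] * len(demand)


def provision_peak(demand: list[int]) -> list[int]:
    """Peak-based: one constant supply level equal to maximum demand."""
    return [max(demand)] * len(demand)


def provision_flexible(demand: list[int], step: int) -> list[int]:
    """Flexible: supply follows demand each interval, rounded up to a
    hypothetical adjustment granularity `step` (integer ceiling division)."""
    return [-(-d // step) * step for d in demand]


def evaluate(demand, supply) -> tuple[float, float]:
    """Return (total unused capacity, total unmet demand) across intervals."""
    unused = sum(max(s - d, 0) for d, s in zip(demand, supply))
    unmet = sum(max(d - s, 0) for d, s in zip(demand, supply))
    return unused, unmet


if __name__ == "__main__":
    demand = [22, 31, 78, 49, 20]  # hypothetical per-interval demand
    print(evaluate(demand, provision_average(demand)))       # (47.0, 47.0)
    print(evaluate(demand, provision_peak(demand)))          # (190, 0)
    print(evaluate(demand, provision_flexible(demand, 10)))  # (20, 0)
```

Under these assumptions, the average-based plan leaves demand unmet at the peak, the peak-based plan removes the shortfall at the cost of a lot of idle capacity, and the flexible plan removes the shortfall while keeping idle capacity small.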

with my wife