
The Computing for the Fair Human Life.

From Claude with some prompting

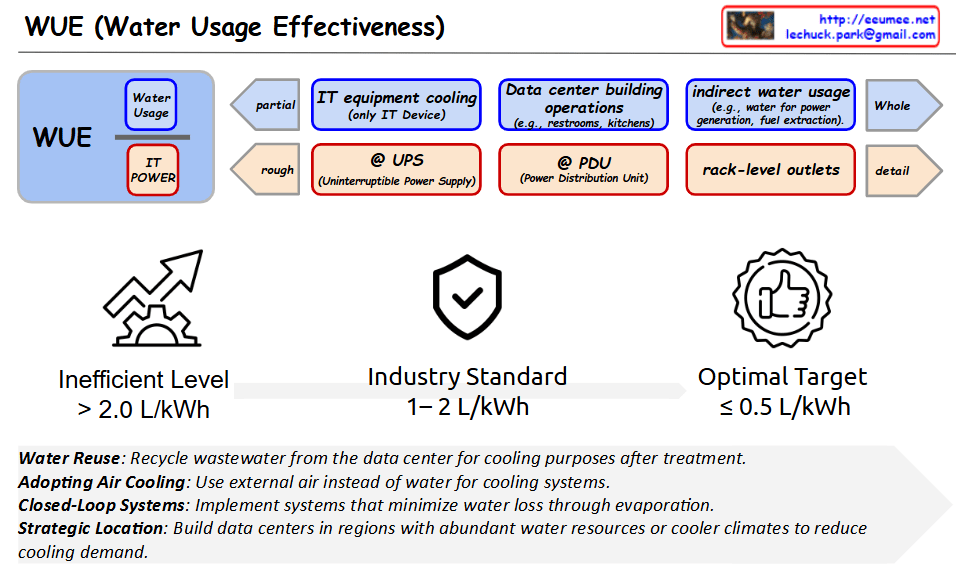

The image provides information about Water Usage Effectiveness (WUE), which is a metric used to measure the water efficiency of data centers. Here’s a breakdown of the key points:

The key update here is that the WUE calculation can use either the IT Power component or the Water Usage component, but not both simultaneously.
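For reference, the commonly cited Green Grid definition of WUE divides annual site water usage (liters) by IT equipment energy (kWh). The sketch below assumes that definition, which may differ from the variant shown in the image. The function name and the sample figures are hypothetical.

```python
def wue(annual_water_liters: float, it_energy_kwh: float) -> float:
    """Water Usage Effectiveness in liters per kWh of IT equipment energy.

    Assumes the site-level definition: annual water usage / IT energy.
    """
    if it_energy_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if annual_water_liters < 0:
        raise ValueError("water usage cannot be negative")
    return annual_water_liters / it_energy_kwh


# Hypothetical figures for illustration only, not taken from the image.
example = wue(annual_water_liters=45_000_000, it_energy_kwh=25_000_000)
print(f"WUE = {example:.2f} L/kWh")
```

A lower value means less water is consumed per unit of IT energy.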

From Claude with some prompting

Detailed explanation of the PUE levels:

The key differences between the levels are in terms of cost, precision, and the scope of data collected. Organizations can choose the appropriate PUE measurement level based on their operational goals and budget.
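To make the effect of the measurement level concrete, here is a minimal sketch. It assumes the usual definition, PUE = total facility energy / IT equipment energy, and assumes that the levels differ mainly in where IT energy is metered (UPS output, PDU output, IT equipment input). The metering points reflect my understanding of the Green Grid scheme, and all readings are hypothetical.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be below IT energy")
    return total_facility_kwh / it_equipment_kwh


# Hypothetical readings for one facility over the same period.
# Assumption: metering closer to the IT load excludes more distribution
# losses, so the IT figure shrinks and the reported PUE rises.
total_kwh = 1500.0
it_kwh_by_level = {
    "Level 1 (UPS output)": 1000.0,
    "Level 2 (PDU output)": 960.0,
    "Level 3 (IT equipment input)": 930.0,
}

for level, it_kwh in it_kwh_by_level.items():
    print(f"{level}: PUE = {pue(total_kwh, it_kwh):.3f}")
```

The formula is the same at every level. Only the precision of the IT energy figure changes, which is why the higher levels cost more to instrument.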

Bringing a digital “1” from the real world is far from simple.

Ensuring and maintaining a digital “1” involves numerous challenges and complexities.

With ChatGPT

From Claude with some prompting

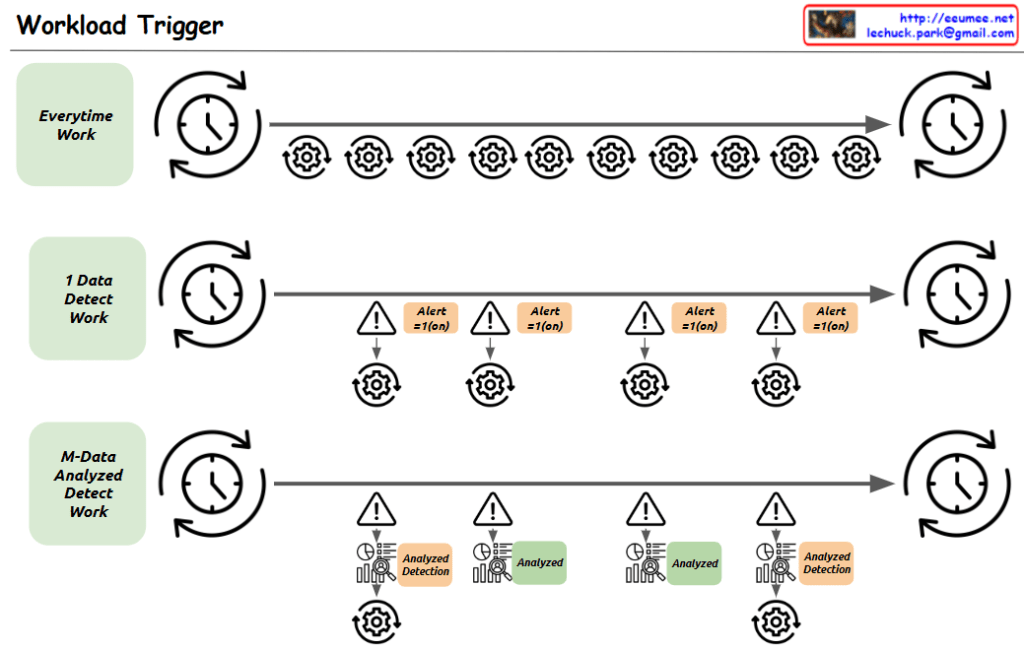

The image appears to be a diagram illustrating a “Workload Trigger” process. It shows three main stages of work:

The overall flow of the diagram moves from left to right, with the work cycling through the three main stages. The timing of the work cycles is indicated by the clocks at the start and end of each stage.

The diagram appears to illustrate an automated monitoring or analysis workflow that triggers alerts when certain data patterns or conditions are detected.
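A condition-triggered workflow of this kind can be sketched in a few lines. This is only an illustration: the collect, check, and alert steps, the `Alert` type, the `run_trigger` function, and the threshold are all hypothetical and are not taken from the diagram.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Alert:
    index: int    # position of the sample that fired the trigger
    value: float  # the sample value itself


def run_trigger(samples: Iterable[float],
                condition: Callable[[float], bool]) -> List[Alert]:
    """Scan samples in order and record an alert whenever the condition holds."""
    alerts = []
    for i, value in enumerate(samples):
        if condition(value):
            alerts.append(Alert(index=i, value=value))
    return alerts


# Hypothetical readings and threshold, for illustration only.
readings = [21.0, 22.5, 35.2, 23.1, 40.0]
alerts = run_trigger(readings, lambda v: v > 30.0)
for alert in alerts:
    print(f"alert at sample {alert.index}: value {alert.value}")
```

In a real system the condition would encode whatever data pattern the workflow is watching for, and the loop would run on each work cycle.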

From Claude with some prompting

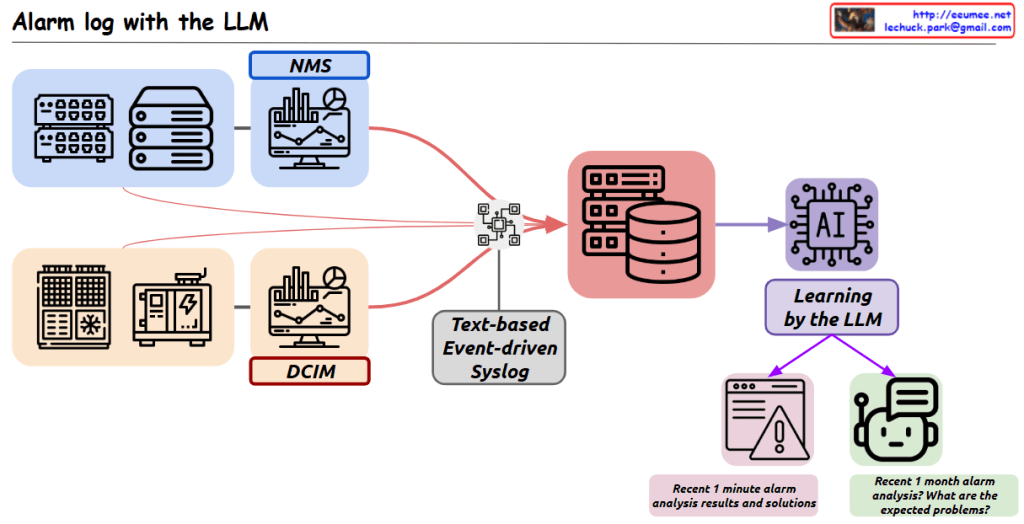

This image represents an “Alarm log with the LLM” system. The key components and functionality are as follows:

Overall, this system can provide powerful alarm management capabilities through real-time monitoring and predictive analysis.