The Computing for the Fair Human Life.

With Claude

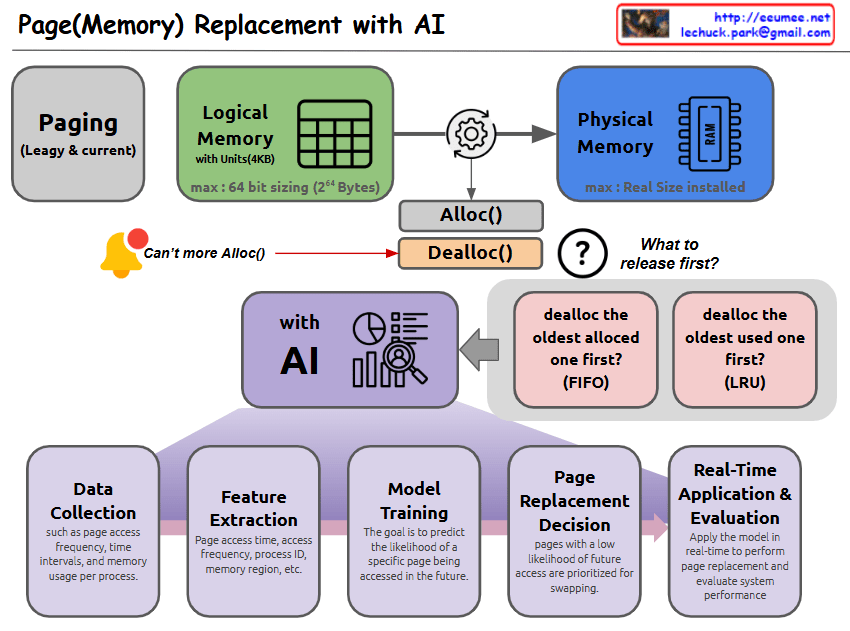

This image illustrates a page (memory) replacement system that uses AI.

This system integrates traditional page replacement algorithms with AI technology to achieve more efficient memory management. The use of AI helps in making more intelligent decisions about which pages to keep in memory and which to swap out, based on learned patterns and predictions.
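To make the idea concrete, here is a minimal sketch of how a prediction could be layered on top of a classic LRU policy. It is not the design shown in the image: the class name `PredictiveLRUCache`, the `candidates` parameter, and the frequency-based `predict_reuse` scorer are all assumptions made for illustration, with the frequency count standing in for a learned model.

```python
from collections import OrderedDict, defaultdict


class PredictiveLRUCache:
    """LRU page cache whose eviction choice is biased by a reuse predictor.

    The predictor is a plain access-frequency count, a stand-in for a
    learned model (an assumption of this sketch).
    """

    def __init__(self, capacity, candidates=2):
        self.capacity = capacity
        self.candidates = candidates   # how many of the oldest pages to consider
        self.pages = OrderedDict()     # page id -> None, least recently used first
        self.freq = defaultdict(int)   # hypothetical predictor state
        self.faults = 0

    def predict_reuse(self, page):
        # Hypothetical score: higher means "more likely to be reused soon".
        return self.freq[page]

    def access(self, page):
        """Touch a page; return True on a hit, False on a page fault."""
        self.freq[page] += 1
        if page in self.pages:
            self.pages.move_to_end(page)   # hit: mark as most recently used
            return True
        self.faults += 1
        if len(self.pages) >= self.capacity:
            # Plain LRU would evict the single oldest page. Here the
            # predictor chooses among the few oldest pages; ties fall
            # back to the oldest one, which is ordinary LRU behavior.
            oldest = list(self.pages)[: self.candidates]
            victim = min(oldest, key=self.predict_reuse)
            del self.pages[victim]
        self.pages[page] = None
        return False


cache = PredictiveLRUCache(capacity=2)
for p in [1, 1, 1, 2, 3]:
    cache.access(p)
# Page 1 is the oldest but has been used most, so page 2 is evicted instead.
```

With plain LRU the same access sequence would evict page 1. Restricting the predictor to a small window of old pages keeps the traditional policy as a safety net when predictions are poor.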

With Claude

This image shows the evolution of data analysis and its characteristics at each stage:

Analysis Evolution:

Bottom Indicators’ Changes:

This diagram illustrates the trade-offs in the evolution of data analysis. As methods progress from simple one-dimensional analysis to complex ML/DL, their sophistication and complexity increase while comprehensibility and ease of implementation decrease. More advanced techniques are more powerful, but they require greater expertise and may be less transparent in how they reach decisions.

The progression also demonstrates how modern analysis methods can handle increasingly complex data but at the cost of reduced explainability and the need for specialized knowledge to implement them effectively.

With Claude

TIMELY (Transport Informed by MEasurement of LatencY)

The TIMELY system demonstrates an efficient approach to network congestion control using RTT measurements, particularly suitable for cloud and data center environments where latency and efficient data transmission are critical. The system’s kernel-level implementation and MSS-based adjustments provide fine-grained control over network traffic, though success heavily depends on accurate RTT measurements and proper environment calibration.
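The RTT-driven control loop can be sketched roughly as follows. This is a simplified illustration of gradient-based rate adjustment, not the production algorithm: the names `TimelyState` and `on_rtt_sample` are hypothetical, every threshold and constant is an illustrative assumption, and the sketch covers only the rate computation, not pacing or segment sizing.

```python
from dataclasses import dataclass


@dataclass
class TimelyState:
    rate: float             # current sending rate, bits per second
    prev_rtt: float         # previous RTT sample, seconds
    rtt_diff: float = 0.0   # smoothed difference between RTT samples, seconds


def on_rtt_sample(s, new_rtt, min_rtt=20e-6, t_low=50e-6, t_high=500e-6,
                  alpha=0.875, beta=0.8, delta=10e6):
    """Update the sending rate from one RTT sample (all constants assumed)."""
    new_diff = new_rtt - s.prev_rtt
    s.prev_rtt = new_rtt
    # Exponentially weighted moving average of the RTT difference.
    s.rtt_diff = (1 - alpha) * s.rtt_diff + alpha * new_diff
    gradient = s.rtt_diff / min_rtt   # normalized RTT gradient

    if new_rtt < t_low:
        # Latency is very low: probe for bandwidth additively.
        s.rate += delta
    elif new_rtt > t_high:
        # Latency is too high: back off in proportion to the overshoot.
        s.rate *= 1 - beta * (1 - t_high / new_rtt)
    elif gradient <= 0:
        # RTT is flat or falling: queues are draining, so increase.
        s.rate += delta
    else:
        # RTT is rising: decrease in proportion to the gradient.
        # The 0.5 floor is a guard added in this sketch, not part of
        # any published description.
        s.rate *= max(0.5, 1 - beta * gradient)
    return s.rate


state = TimelyState(rate=1e9, prev_rtt=100e-6)
on_rtt_sample(state, 110e-6)   # RTT rising, so the rate is reduced
```

Reacting to the change in RTT rather than only its absolute value lets the sender respond as a queue starts to build, which is why measurement accuracy matters so much.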

With Claude

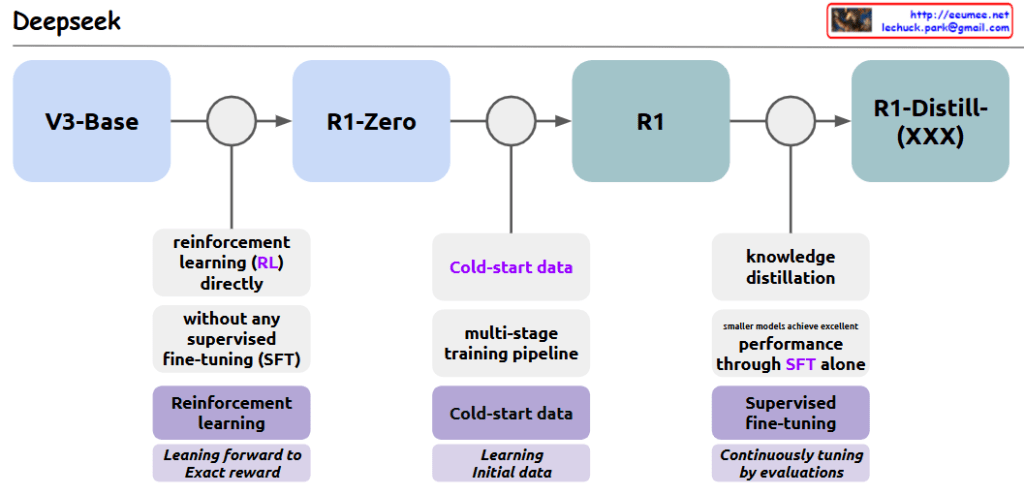

The evolution pipeline of the DeepSeek model consists of three major stages.

This pipeline demonstrates a comprehensive approach to model development, incorporating various advanced AI training techniques and methodologies to achieve optimal performance at each stage.

With Claude

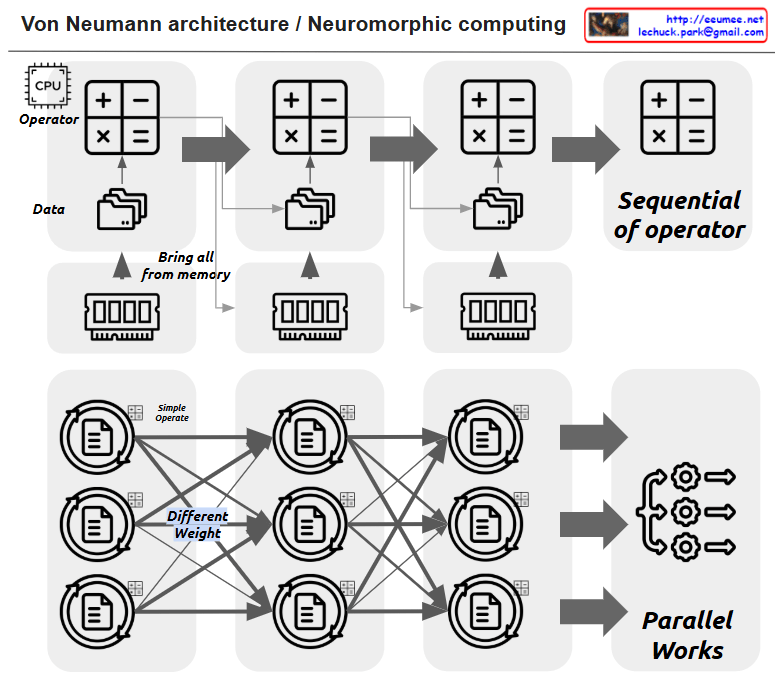

This image illustrates the comparison between Von Neumann architecture and Neuromorphic computing.

The upper section shows the traditional Von Neumann architecture:

The lower section demonstrates Neuromorphic computing:

Key differences between these architectures:

The main advantage of Neuromorphic computing is that it provides a more efficient architecture for artificial intelligence and machine learning tasks by mimicking the biological neural networks found in nature. This parallel processing approach can handle complex computational tasks more efficiently than traditional sequential processing in certain applications.

The image effectively contrasts how data flows and is processed in these two distinct computing paradigms – the linear, sequential nature of Von Neumann versus the parallel, interconnected nature of Neuromorphic computing.
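As a small illustration of the neuromorphic side, the sketch below simulates a single leaky integrate-and-fire neuron, a common abstraction in neuromorphic systems. It is not taken from the image: the function name `simulate_lif` and the `threshold`, `leak`, and `reset` values are assumptions chosen for readability.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Return the time steps at which a leaky integrate-and-fire neuron spikes."""
    v = reset        # membrane potential
    spikes = []
    for t, i_in in enumerate(input_current):
        v = leak * v + i_in      # decay the old potential, then add the input
        if v >= threshold:
            spikes.append(t)     # emit a spike event
            v = reset            # and reset the potential
    return spikes


spike_times = simulate_lif([0.5, 0.5, 0.5, 0.5])
```

The neuron holds its own state and only produces output when its threshold is crossed. Many such units updating independently give the parallel, interconnected behavior described above, in contrast to a single processor fetching instructions and data from a shared memory.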