With Claude

Here’s an analysis of the image and its key elements:

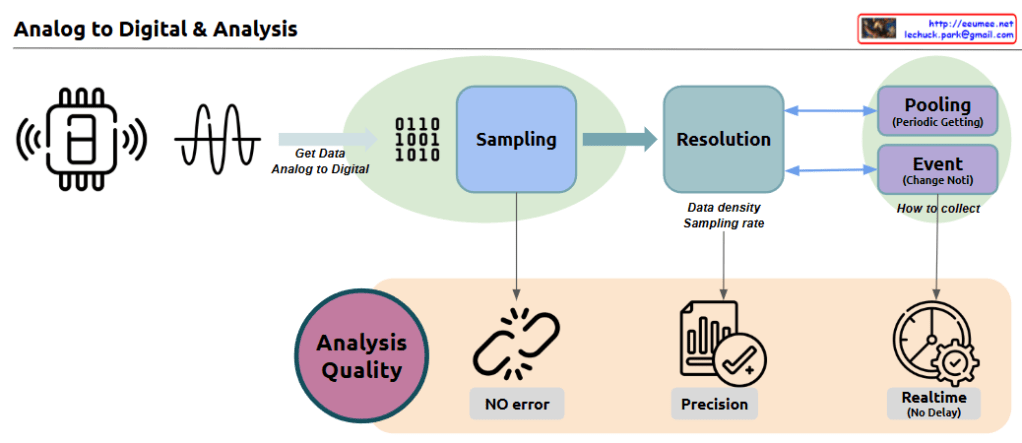

- Sampling Stage
  - The initial stage of converting analog signals into digital values
  - Converts analog waveforms from sensors into digital data (e.g., 0110 1001 1010)
  - A critical first step that determines data quality
  - The foundation for all subsequent processing

- Resolution Stage
  - Determines data quality through data density and sampling rate
  - Directly affects data precision and accuracy
  - Sets the level of detail available to all later analysis
  - Controls the granularity of the digital conversion

- How to Collect
  - Polling: collecting data at predetermined, periodic intervals
  - Event: collecting data only when a change is detected
  - Offers flexibility to match the collection strategy to the need: polling yields regular, predictable samples, while event-driven collection avoids storing redundant readings when values change rarely

- Analysis Quality
  - No error: aims for error-free data processing
  - Precision: keeps analysis results consistent and accurate
  - Real-time: supports processing data as it arrives
  - Comprehensive quality control throughout the process
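The Sampling and Resolution stages can be illustrated with a minimal Python sketch. It assumes an idealized converter with a fixed input range of -1 to 1 and uses a hypothetical 5 Hz sine wave as the sensor signal; the names `sample_and_quantize` and `reconstruct` are illustrative, not from any particular library. Sampling reads the signal at a fixed rate, and quantization maps each reading to one of 2^bits levels, so more bits give finer resolution and a smaller worst-case error (half a level width when each code is reconstructed at its level midpoint).

```python
import math
from typing import Callable, List, Tuple


def sample_and_quantize(
    signal: Callable[[float], float],
    duration_s: float,
    sample_rate_hz: float,
    bits: int,
    v_min: float = -1.0,
    v_max: float = 1.0,
) -> List[Tuple[float, int]]:
    """Sample `signal` at a fixed rate and quantize each sample to `bits` bits.

    Hypothetical helper assuming an ideal converter with range [v_min, v_max].
    Returns (time, code) pairs, where code is an integer in [0, 2**bits - 1].
    """
    levels = 2 ** bits
    step = (v_max - v_min) / levels  # width of one quantization level
    n_samples = round(duration_s * sample_rate_hz)
    samples = []
    for i in range(n_samples):
        t = i / sample_rate_hz  # sampling: read the signal at evenly spaced instants
        v = min(max(signal(t), v_min), v_max)  # clip to the converter's input range
        code = min(int((v - v_min) / step), levels - 1)  # quantization: pick a level
        samples.append((t, code))
    return samples


def reconstruct(code: int, bits: int, v_min: float = -1.0, v_max: float = 1.0) -> float:
    """Map a code back to the midpoint of its quantization level."""
    step = (v_max - v_min) / 2 ** bits
    return v_min + (code + 0.5) * step


if __name__ == "__main__":

    def wave(t: float) -> float:
        # Hypothetical sensor: a 5 Hz sine wave with amplitude 1
        return math.sin(2 * math.pi * 5 * t)

    for bits in (4, 8):
        data = sample_and_quantize(wave, duration_s=0.2, sample_rate_hz=100, bits=bits)
        codes = " ".join(format(code, f"0{bits}b") for _, code in data[:3])
        max_err = max(abs(wave(t) - reconstruct(code, bits)) for t, code in data)
        print(f"{bits}-bit: first codes {codes}, max error {max_err:.4f}")
```

Going from 4 to 8 bits shrinks the level width from 0.125 to about 0.0078, and the worst-case reconstruction error shrinks with it; raising `sample_rate_hz` instead captures faster changes in the waveform.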

Why These Elements Matter in Data Collection and Analysis:

- Accuracy: Essential for reliable data-driven decision making. The quality of input data directly affects the validity of results and conclusions.

- Real-time Processing: Critical for immediate response and monitoring, enabling quick decisions and timely interventions when needed.

- Efficiency: Choosing the right collection method avoids wasted sampling, storage, and bandwidth, keeping data management cost-effective.

- Quality Control: Consistent quality maintenance throughout the entire process determines the reliability of analytical results.
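The Polling and Event strategies from the How to Collect stage, and the efficiency point above, can be compared in a small Python sketch. Everything here is an assumption for illustration: `step_sensor` is a hypothetical sensor whose value jumps once, `poll` and `collect_on_change` are hypothetical helper names, and the event-driven side uses a simple deadband (a reading is kept only when the value moves by more than `threshold`) checked in a fine time loop rather than a real interrupt or push notification.

```python
from typing import Callable, List, Optional, Tuple

Reading = Tuple[float, float]  # (time in seconds, measured value)


def poll(read: Callable[[float], float], duration_s: float, interval_s: float) -> List[Reading]:
    """Polling: read the sensor at predetermined, periodic intervals."""
    n = round(duration_s / interval_s)
    return [(i * interval_s, read(i * interval_s)) for i in range(n)]


def collect_on_change(
    read: Callable[[float], float],
    duration_s: float,
    check_interval_s: float,
    threshold: float,
) -> List[Reading]:
    """Event-driven (hypothetical deadband sketch): keep a reading only when the
    value has moved by more than `threshold` since the last kept reading.

    The fine-grained loop stands in for a real event source such as an
    interrupt or a message pushed by the device.
    """
    readings: List[Reading] = []
    last_value: Optional[float] = None
    for i in range(round(duration_s / check_interval_s)):
        t = i * check_interval_s
        value = read(t)
        if last_value is None or abs(value - last_value) > threshold:
            readings.append((t, value))  # a change was detected: record the event
            last_value = value
    return readings


def step_sensor(t: float) -> float:
    """Hypothetical temperature sensor: 20.0 until t = 5 s, then 25.0."""
    return 20.0 if t < 5.0 else 25.0


if __name__ == "__main__":
    polled = poll(step_sensor, duration_s=10.0, interval_s=0.5)
    events = collect_on_change(step_sensor, duration_s=10.0, check_interval_s=0.1, threshold=0.5)
    print(f"polling kept {len(polled)} readings; event-driven kept {len(events)}")
```

For this step-shaped signal, polling stores 20 readings, most of them repeats, while the event-driven version stores only the initial value and the change at t = 5 s. For a noisy or fast-changing signal the balance shifts, since many changes would exceed the threshold; the `threshold` value is what controls that trade-off.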

These elements work together to enable reliable data-driven decision-making and analysis. A data analysis system succeeds only when each component, from initial sampling to final analysis, is carefully implemented and monitored; when properly integrated, the components form a robust framework for accurate, efficient, and reliable data processing.