From DALL-E with some prompting

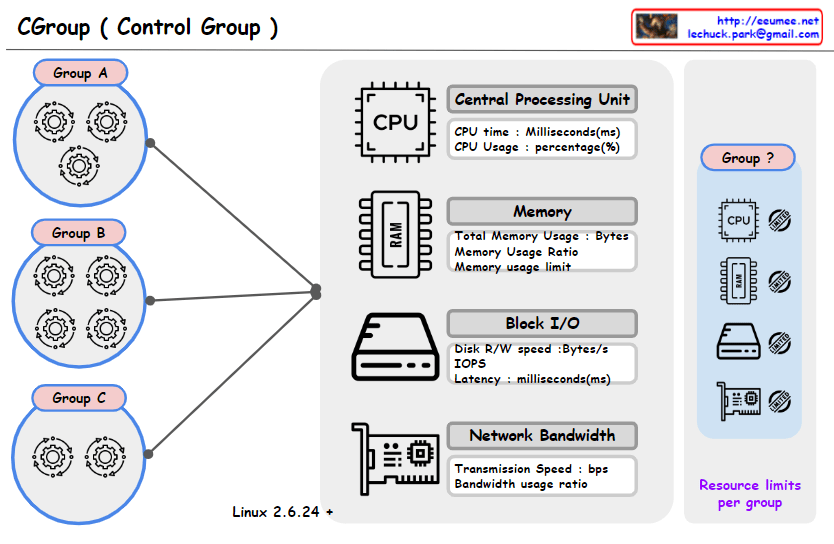

This image is a concept diagram of ‘Control Groups’ (Cgroups) in the Linux kernel. Cgroups let the system account for, limit, and prioritize the resources used by groups of processes. Each control group can have limits set for resources such as CPU, memory, and block I/O. Network bandwidth is handled less directly: in cgroup v1, the net_cls and net_prio controllers tag or prioritize a group's traffic so that the usual traffic-control tools can shape it.

Groups A, B, C: Each circle represents a separate control group, and the gear icons within each group symbolize the processes assigned to that group.
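Which group a process belongs to can be inspected from userspace. As a minimal sketch, assuming the `/proc/<pid>/cgroup` line format `hierarchy-ID:controller-list:path`, the hypothetical helper `parse_proc_cgroup` below turns that text into a mapping from controller name to group path. The cgroup v2 entry has an empty controller list, so it is stored under the key `"unified"`, a label made up for this example.

```python
from pathlib import Path


def parse_proc_cgroup(text: str) -> dict[str, str]:
    """Map each controller name to the cgroup path of a process.

    Each line of /proc/<pid>/cgroup has the form
    "hierarchy-ID:controller-list:path". The cgroup v2 entry has an
    empty controller list; it is stored under the key "unified"
    (a label chosen for this sketch, not a kernel name).
    """
    groups: dict[str, str] = {}
    for line in text.splitlines():
        if not line.strip():
            continue
        # Split at most twice so a path containing ":" stays intact.
        _hierarchy_id, controllers, path = line.split(":", 2)
        if controllers == "":
            groups["unified"] = path
        else:
            for name in controllers.split(","):
                groups[name] = path
    return groups


def current_cgroups(pid: str = "self") -> dict[str, str]:
    """Read and parse /proc/<pid>/cgroup (Linux only)."""
    return parse_proc_cgroup(Path(f"/proc/{pid}/cgroup").read_text())
```

Moving a process into a group is the reverse operation: its PID is written to the group's `cgroup.procs` file, which normally requires root or a delegated subtree.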

The central graphical elements represent various system resources:

CPU: Represents CPU time and usage (milliseconds, percentage).

Memory (RAM): Shows total memory usage, memory usage ratio, and memory usage limit.

Block I/O: Illustrates disk read/write speed, number of input/output operations per second (IOPS), and latency.

Network Bandwidth: Displays transmission speed and bandwidth usage ratio.
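The CPU and memory figures above correspond to values the kernel exposes as plain text files in each group's directory. The following is a sketch, assuming the cgroup v2 file names `cpu.stat` (with its `usage_usec` field), `memory.current`, and `memory.max`; the function names are illustrative, and the directory passed to `read_usage` is assumed to be a non-root group with the memory controller enabled.

```python
from pathlib import Path
from typing import Optional


def parse_flat_keyed(text: str) -> dict[str, int]:
    """Parse a "key value" per line file such as cgroup v2 cpu.stat."""
    stats: dict[str, int] = {}
    for line in text.splitlines():
        parts = line.split()
        if len(parts) == 2:
            stats[parts[0]] = int(parts[1])
    return stats


def memory_usage_ratio(current: str, limit: str) -> Optional[float]:
    """Return usage / limit, or None when there is no usable limit."""
    limit = limit.strip()
    if limit == "max":  # "max" means the group is unlimited
        return None
    limit_bytes = int(limit)
    if limit_bytes == 0:
        return None
    return int(current.strip()) / limit_bytes


def read_usage(cgroup_dir: Path) -> dict[str, object]:
    """Collect CPU time (ms) and memory usage for one cgroup v2 directory."""
    cpu = parse_flat_keyed((cgroup_dir / "cpu.stat").read_text())
    current = (cgroup_dir / "memory.current").read_text()
    limit = (cgroup_dir / "memory.max").read_text()
    return {
        "cpu_ms": cpu["usage_usec"] / 1000,  # microseconds to milliseconds
        "memory_bytes": int(current.strip()),
        "memory_ratio": memory_usage_ratio(current, limit),
    }
```

Sampling `cpu_ms` twice and dividing the difference by the elapsed wall-clock time gives the CPU usage percentage the diagram alludes to.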

In the upper right, there’s a section labeled “Resource limits per group” with an icon for each resource and a group marked with a question mark. This most likely illustrates the limits that can be set for each control group.
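Per-group limits of this kind are set by writing values into files in the group's directory. The sketch below assumes the cgroup v2 interface, where `cpu.max` takes a quota and a period in microseconds and `memory.max` takes a byte count or the word `max`. The helper names and parameters are hypothetical, and on a real system the writes would typically need root privileges.

```python
from pathlib import Path
from typing import Optional


def format_cpu_max(cpu_fraction: Optional[float], period_us: int = 100_000) -> str:
    """Build a cpu.max value ("quota period"); None means no CPU limit."""
    if cpu_fraction is None:
        return f"max {period_us}"
    if cpu_fraction <= 0:
        raise ValueError("cpu_fraction must be positive")
    # 0.5 of one CPU with a 100 ms period becomes a 50 ms quota.
    return f"{round(cpu_fraction * period_us)} {period_us}"


def format_memory_max(limit_bytes: Optional[int]) -> str:
    """Build a memory.max value; None means no memory limit."""
    if limit_bytes is None:
        return "max"
    if limit_bytes < 0:
        raise ValueError("limit_bytes must not be negative")
    return str(limit_bytes)


def write_limits(
    cgroup_dir: Path,
    cpu_fraction: Optional[float] = None,
    memory_bytes: Optional[int] = None,
) -> None:
    """Write CPU and memory limits into one cgroup v2 directory."""
    (cgroup_dir / "cpu.max").write_text(format_cpu_max(cpu_fraction) + "\n")
    (cgroup_dir / "memory.max").write_text(format_memory_max(memory_bytes) + "\n")
```

For example, `write_limits(Path("/sys/fs/cgroup/group_a"), cpu_fraction=0.5, memory_bytes=256 * 1024**2)` would cap a group at half a CPU and 256 MiB, assuming a group named `group_a` exists there and the `cpu` and `memory` controllers are enabled in the parent's `cgroup.subtree_control`.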

At the bottom, “Linux 2.6.24 +” indicates that the Cgroups feature is available from Linux kernel version 2.6.24 onwards.

Overall, the image seems to have been created to explain the concept of Cgroups and how resources can be managed for different groups within a system.